Killer Robots and Robot Killers

The Genealogy of Autonomous Weapons Systems and What that Says About Today

Note: For those of you new here, or who do not know much about me, I’ve been involved in discussions and analysis of Autonomous Weapons Systems (AWS), academically, practically, and policy-wise, for over a decade. I testified as an expert to the United Nations Convention on Certain Conventional Weapons (CCW) at the very beginning of this journey, I’ve published academically, and I’ve lent my skills and brain to more than one type of organization over the years.

With this inaugural post, I want to draw attention to a few things that have been gnawing at me with regard to the continued debate on autonomous weapons systems (AWS). These little “niggles” in my brain have bubbled up lately, especially with the recent “Vienna Conference” on AWS hosted by the government of Austria last week. That conference repeated much of what has gone on over the past 10 years, so I won’t spend much time on it here. But the biggest item that I keep going over in my mind is the assertion that there is a need for new legally binding “instruments” to regulate the development, deployment, and use of AWS, while simultaneously holding that any new law would not prohibit existing and already deployed weapons systems.

The reality is that States will not create laws that do not allow them to use the weapons they already have in their arsenals. More practically, at the very onset of the debate about AWS, the Campaign to Stop Killer Robots explicitly stated that they were not concerned with existing weapons systems, only *new* weapons systems. After some pushback, the political maneuver was then to claim that “fully” autonomous weapons should be prohibited, while “semi-autonomous” weapons, like those in use today (and before), are not the issue. Their position has shifted a bit more over the years, however: now *all* systems should be regulated by the concept of meaningful human control (MHC), and prohibitions should be carved out only for certain systems. I’ve got a variety of things to say about this, as I was one of the first authors of the concept of MHC (along with Article 36), but those are for another post.

Here is my challenge: if we go back to the very first instances of the use of automation (and dare I say “autonomy”) in warfare, looking especially at a WEAPONS SYSTEM, then I do not know how one can create a new legal rule that would regulate “new weapons systems” and not also regulate the entirety of existing weapons systems. Moreover, if all weapons systems are thus regulated, then I am still confused over the grounding of particular prohibitions (those that are not already included under international humanitarian law).

But to show you what I mean, let’s take an actual historical example. Let’s look at the German use of V1 “Flying Bombs,” or, as Winston Churchill called it, the “Robot Bomb Campaign,” during the end of WWII (roughly July 1944 – March 1945).

First, the German V1 could be deemed an “autonomous weapon,” if we want to call it that. It was a generally dumb cruise missile that, once launched and activated, could propel itself up to about 180 miles at a speed of about 400 mph. It possessed a rudimentary autopilot guidance system, which regulated its altitude, speed, and azimuth using gyroscopes and a vane anemometer, as well as radio transmitters. V1s were not what we’d today call accurate, as they had a circular error probability (CEP) of about 19 miles in diameter. While the V2 rocket increased “accuracy” to about a 7-mile CEP, it wasn’t that great either.

So, we may say that they were autonomous in their ability to fly to their destination without the guidance of a human operator, and once their vane anemometer counted down to zero, they nose-dived to a target area. “Selecting” targets wasn’t a capability; they fell where they fell. Their “dumb” status wasn’t any different than all the other munitions at the time, but what was different, and what earned them a major “game changer” status, was their stand-off capability.

Churchill even noted in July of 1944 that “the flying bomb [V1] is a weapon literally and essentially indiscriminate in its nature, purpose and effect.” We will not here discuss some of the hypocrisy of Churchill’s claim (given Allied firebombing and such). But the threat to British and Allied citizens was very real, and countering these menacing threats was of utmost importance to the British and American forces. While British pilots were earning their “aces” as “Robot Killers” during this time, it was actually the confluence of three technological advances that made the V1s and V2s far less useful to Germany and really pushed us to where we are today: autonomous weapons systems.

What were these? In no particular order, they were the proximity fuze, the SCR-584 radar, and the M3 “gun data computer” together with the subsequent M9 Gun Director. Putting all of these together created what was really the first “autonomous weapons system.”

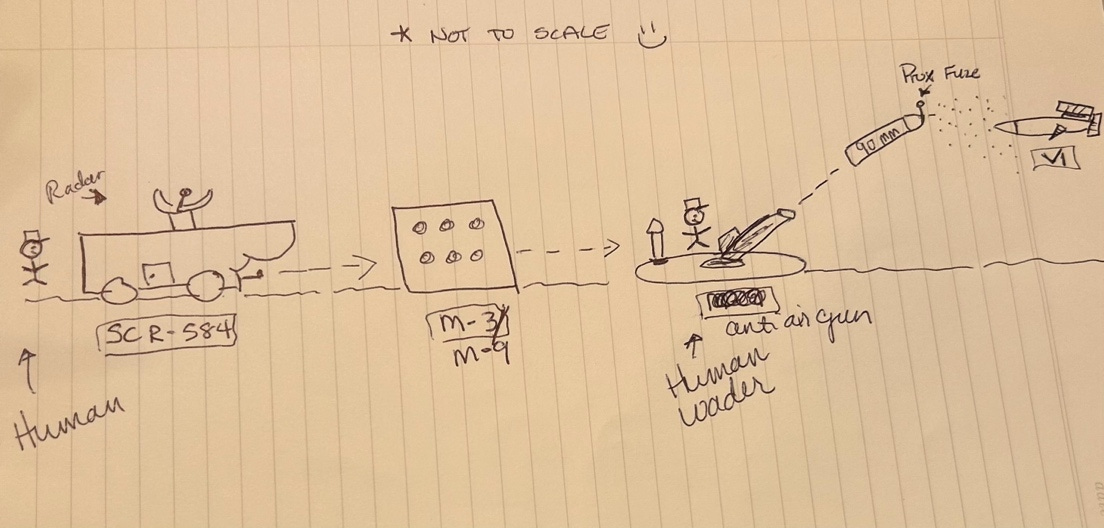

Here is a lovely little scribble I made while trying to put it all together in my head:

Without boring everyone too much, the gist of all of it is this:

The SCR-584 Radar was an automatic tracking, mobile ground-based system that located the direction and range of incoming V1s. This information was then passed to the M9 “gun director” computer, which calculated corrections for wind, drift, the earth’s rotation, air density, height, and spot, plus a few others. Human operators loaded 90mm anti-aircraft shells outfitted with proximity fuzes into anti-aircraft guns. Once fired, the 90mm shells traveled to the area they were aimed at, and once a shell’s proximity fuze came close enough to an object (the V1), it detonated.

So you have here an entire weapons system. The radar, the computer, the gun, the shell (with explosives) and the fuze. Importantly, you also have all the things that weapons systems require too (all the pesky things like personnel, trucks, and such). But the only place that humans were doing any work in that moment was to put these systems in place, turn them on, and then load the shells. They weren’t calculating the maths for where to fire, and they weren’t pressing any buttons to issue radar signals to initiate detonation.

Ironically, at the time, the V1s were referred to as flying robots, with countermeasures being “robot killers.” At least one article I’ve found from the period also calls the V1s “killer robots.” So we have killer robots and robot killers all happening 80 years ago.

Why does this matter for our discussion on AWS today? Well, it has everything to do with it, actually.

The suite of technological developments happening from the 1930s into the 1940s set the stage for their combined use in WWII. WWII produced some of the biggest breakthroughs in science, and those breakthroughs are the grandfathers to current versions of technologies and weapons used by armed forces the world over.

The V1 was reverse engineered and formed the basis of many of the early “smart weapons” of the US military (and others), because they all rely to some extent on precision, navigation, and timing, and the ability to locate a target and attack it. The V1 by itself is just a big, fast, dumb bomb that can fly longer distances. It is the pairing of it with sensors and communication links that gives it new capabilities.

The basis, however, of all of these capabilities has to do with sensors, data, probability estimates, and the laws of physics. Weapons systems in deployment for the past 80 years have not fundamentally changed in how they work. Sure, we have gained advantages in size, weight and power (aka SWAP) – both through miniaturization as well as through maximalization (think thermonuclear warheads in the megatonnage). Yet the continuous calculations that were taking place on the M3/M9 and the continuous calculations that are taking place onboard a loitering munition are fundamentally the same. There are control algorithms to make it go from A to B, there are automatic target recognition algorithms for target identification, and there is some form of decision matrix, algorithm, or control that engages the system. (Nota bene: for some of the earliest versions of automatic recognition systems, see Automatic Terrain Recognition and Navigation, or ATRAN.)

The objection to me pointing all this out is likely to be that all of these old systems relied on computational logic-based systems and our “new” AI systems aren’t anything like that. These new AI systems with their deep learning, their “black boxes,” and their potential unpredictability are absolutely different than their grandfather systems. On the one hand, yes. That objection certainly holds true. The math is different and the control architectures are different. On the other hand, the objection doesn’t hold so well if we take it up a layer of abstraction to the idea that what is being *calculated* are all estimates and probabilities. We may claim there is a wider aperture of uncertainty for the new systems relative to the old, and that the new AWS systems’ behaviors are more complex. That is certainly fair and true.

But if both old and new work on mathematical estimates, then at that level they are the same. If targeting with automatic target recognition algorithms built on rule-based expert systems yields interpretable systems, but those systems are brittle and operationally costly (in both money and lives), then one may opt for different AI-based systems that aren’t as brittle but carry more uncertainty about exactly why they are doing what they do.

While the AWS debate gets sidetracked into the technological weeds at every chance it gets, ultimately what comes to the fore again and again is the notion of control. But war, that is, the conduct of hostilities, is a beast that doesn’t like to be tamed or controlled. The rules put in place over the last two hundred years have attempted to wrangle that beast as best as possible, to be sure. But arguments about control and war aren’t really then about technology. Technological arguments are discrete arguments (i.e., the artifact either has some quality or it doesn’t).

If the arguments about AWS were purely about technology, then the archeology and pedigree of today’s weapons show that we are not in some new paradigm. Moreover, if the arguments were purely about technology, then every time a new level of technical accuracy is reached, the argument against its use is defeated.

Humans have not been “choosing” each and every target or taking every shot for quite some time. Close-in Weapons Systems, Surface to Air Missile Defense, Ballistic Missile Defense, etc. are not weapons whose use requires vast human deliberation. Even more “offensive” weapons (if there is really a distinction to be made between defense and offense) likewise can be used without needing a human to deliberate on target A versus target B, even though targets A and B belong to the same set S. The deliberation takes place at a different time. The deliberation takes place when the training data is selected, when the sensor suites are chosen, and when the Commander, in conjunction with her weaponeers, intelligence officers, and lawyers, identifies military objectives and matches weapons to those objectives.

Control, then, as best as we can have it during armed conflict, is about creating structures of processes and rules for the conduct of war. We have a lot of those now. Command and Control is, in fact, predicated on this very idea.

So why all the fuss about AWS? Well, there is something that seems deeply troubling about delegating certain tasks to machines. I cannot here (in a blog) give you a defense of exactly what that is, but I can point to the very fact that militaries have, for the past 80 years, fought with systems that let them cause very great destruction, at a distance, using sensors, data, and computational power, and little to no fuss was made.

The decision to use these forms of power resides with humans, then as it does now. Choices to use a variety of AI-enabled means and methods have likewise already been made. The decision about whether, and to what extent, to use AI for targeting was also made decades ago; that fact has been established. The question now is not whether to use it; it is now about negotiating the details and the price of how much to use it.

Insightful as always!

I’m curious, has your definition of AWS changed since 2015?

"Armed weapons systems, capable of learning and adapting their 'functioning in response to changing circumstances in the environment in which [they are] deployed,' as well as capable of making firing decisions on their own."

This debate always gets tripped up, among other things, around definitions.